Highlights of Experimentation: Q1 CCC Days

Becky Passner

Every quarter, the RoleModel team sets aside regular project work to ask a different kind of question: how could we build better? Not better in theory. Better in the ways that show up in the work we do with our partners. This quarter, five teams ran real experiments. The results were mixed. That was the point.


Every quarter, RoleModel steps back from active project work for two days to focus on one question: how could we build better? These are our CCC days, our quarterly investment in craft.

The experiments share a focus: can an emerging technology earn a place in real-world project work, or can we sharpen an existing tool to better serve our partners? This quarter, we had both.

Design System Aware Code Generation

AI tools don't automatically understand your design system or the standards your team has spent years developing. That gap is the real challenge, not the technology itself.

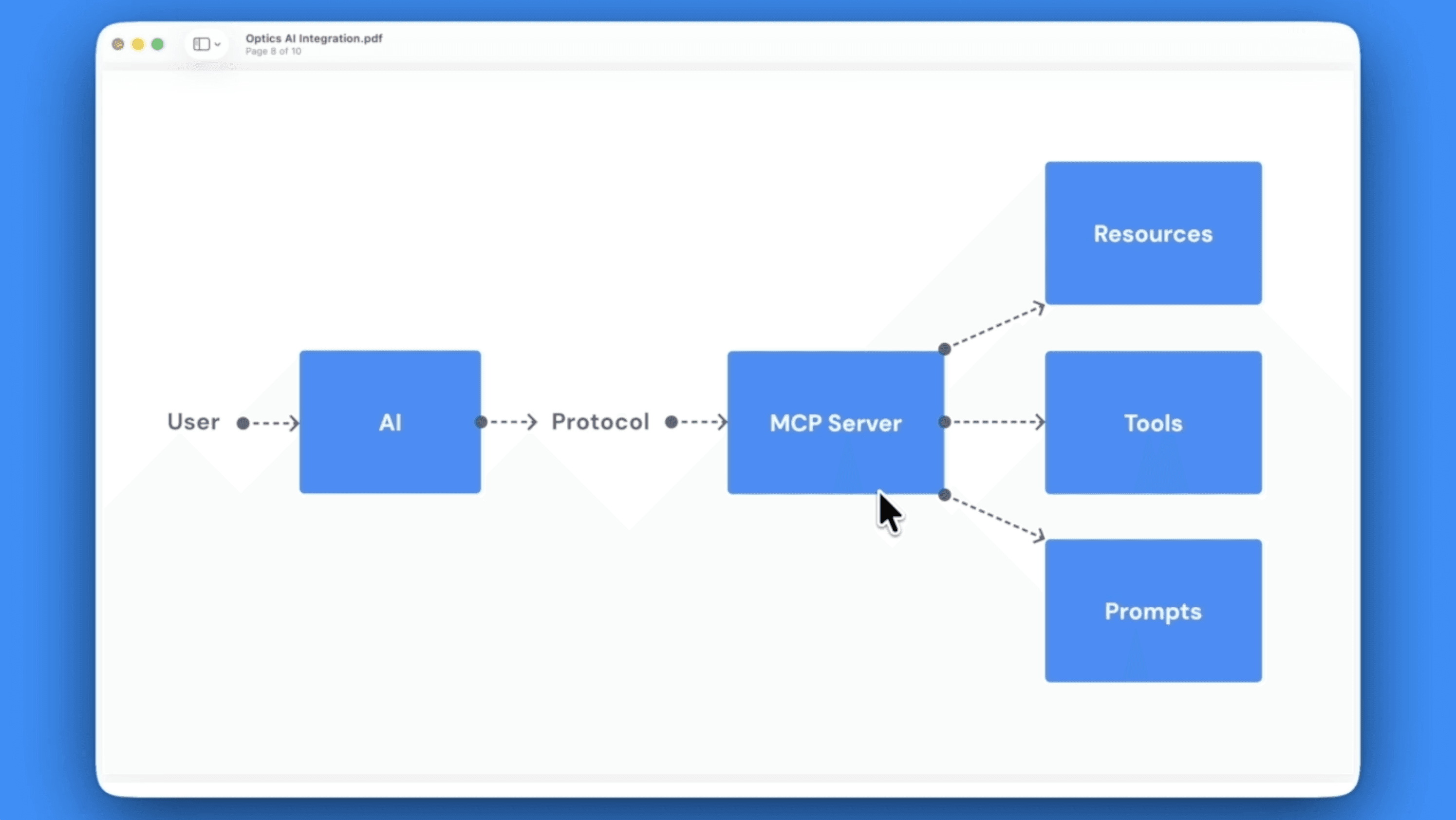

During CCC days, two teams tackled the same problem from different angles. One built skills files: markdown documents that give AI agents the context they need to produce work that fits how we build. The other restructured our MCP server, which uses the Model Context Protocol to give AI tools access to resources, executable actions, and reusable workflow prompts.

The initial runs required adjustment, but the final results were strong: HTML and CSS that followed Optics (our design system) and BEM naming conventions, generated in a fraction of the usual time. Every skill file added to the repository is expertise made permanent. The next project doesn't start from scratch; it starts from everything we've already learned.

LightningCAD.app

LightningCAD is built for the browser. That's a strength, and it's also a ceiling. Heavy computation shares resources with the UI, memory is capped by the browser runtime, and the most demanding use cases start running into those limits.

This experiment explored a different approach: wrapping LightningCAD in Tauri, a desktop framework that keeps the familiar browser-based UI but moves the expensive work into a native Rust backend with direct access to system memory and CPU. The goal: lower memory usage, faster execution, and performance that doesn't degrade as models get heavy.

To test it, we called into the native backend and used GDAL to run complex geometry calculations while the UI stayed fully responsive.

The experiment showed that the path is viable. For partners with demanding workloads, that means LightningCAD no longer has to be bound by what a browser tab can hold. Next up: exploring how much of the app we can make available offline by running a local server alongside the desktop wrapper.

Improving GitHub Copilot Instructions with AI

AI can generate working code fast. The problem is that fast and good aren't the same thing. Left to its own devices, AI often produces code that's inefficient, hard to maintain, and short of the standards we hold our developers to.

So we asked a different question: what if we could teach AI our patterns?

During CCC days, we built a tracking application using GitHub Copilot agents, then manually refactored the output. That refactored code became the source material for skills files that encode RoleModel's known-good patterns. When we applied those skills to the same codebase, the output was cleaner, more maintainable, and closer to what we'd ship in a real project. We also used the skills to build an application for a real partner. Without them, it would have been demo-worthy at best. With them, it became something we could actually build on.

The skills are human-readable, serving double duty: guiding AI output and documenting our best practices for the team. That frees our developers to focus on the complex problems AI still can't solve: the work that actually requires judgment.

AI Delegation

Handing off tasks to agents isn't new to us. What we wanted to learn was which tasks are the right fit, and how to tighten our guardrails so agents produce better output with fewer iterations.

We ran three experiments: tracking down a bug, cleaning up inconsistent code documentation, and clearing a backlog of style and formatting issues. Results were mixed in the most instructive way. The bug got fixed, though it took more back-and-forth than expected. The documentation work stalled, but it led directly to a written guide teaching the agent how we want that work done in the future. The formatting agent ran for an hour, got stuck on a decision that required real judgment, and eventually had to be restarted with better instructions. The second attempt went much better.

The pattern that emerged: when an agent struggles, starting over with a clearer prompt usually beats trying to steer it mid-course.

Where agents show the most promise is in the small, repetitive work that rarely gets prioritized. Easy to hand off, hard to justify scheduling. That's where the team plans to keep exploring.

Open Source Web Component PDF Viewer

The tools that should exist don't always exist. That's where this experiment started.

While working on a recent partner project, our team needed a good option for displaying PDFs inside a web application. PDF.js is the clear leader among open-source PDF rendering libraries, but we couldn't find a good web component built around it: the two we found were outdated and difficult to work with. So during CCC days, we built our own and shipped it.

The developers on this project were experienced, but building web components wasn't their specialty. What made the difference was collaboration: drawing on expertise from across the RoleModel team and using AI to research options and suggest next steps. The result is an open-source PDF viewer that any developer can drop into their application with a single line of code. It covers the features you'd expect: zooming, printing, page navigation, text search, and theme customization, plus a few you might not, like a collapsible thumbnail sidebar and full keyboard shortcuts.

From idea to published package in two days. For our partners, that means tools that should exist actually get built.

What We're Taking Forward

Not every experiment ends with shipped code; some produce learning that shapes how we work next. This quarter produced both: a PDF viewer now in use on a partner project, a viable path to a desktop LightningCAD, a growing skills repository that makes every project start from a stronger position, and clearer patterns for where AI delegation actually earns its keep.

That's what CCC days are for. We don't adopt new tools because they're new. We adopt them when we've seen them produce better outcomes for our partners. The experiments that involved AI were no different: the value wasn't the technology, it was what experienced developers could do with it.